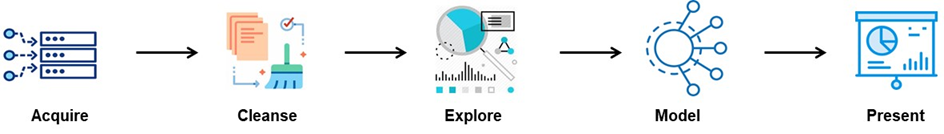

Data Science Pipeline Overview

A data science pipeline involves gathering data from different sources, preparing the data for building models, creating the models, and presenting the findings. Typically, the pipeline starts with the intent to answer a specific analysis question. The specific activities within the Acquire and Cleanse steps may differ depending on whether those steps supply data for multiple analysis questions or for a single question.

This pipeline should not be confused with the “production data pipeline,” where a model is executed in real time, in near real time, or in batch. In production, the model could be run on data coming from various channels, and it could also be invoked through a REST API.

Acquire: The key to acquiring “relevant” data involves having a proper understanding of the question we are trying to answer and having enough knowledge about the data. Depending on the question we are trying to answer, the data could be structured, semi-structured, or unstructured.

Cleanse: The cleansing process starts with profiling the data to understand its quality issues, followed by activities such as removing unnecessary attributes and records, deduplicating, and resolving data “completeness” issues. This is an essential step in the pipeline because the accuracy of machine learning models depends heavily on the data. If the pipeline is generic and serves data for multiple data science questions or analytics workloads, keep the data as atomic as possible (at the column and row level), since data manipulation, feature selection, and feature engineering can be carried out later for a specific model. It is strongly recommended to use self-explanatory column names.
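As a rough illustration of the profiling and deduplication activities described above, here is a minimal sketch using only the Python standard library. The records, column names, and helper functions (`completeness`, `deduplicate`) are hypothetical and exist only for this example; a real pipeline would likely use a dataframe library and richer quality rules.

```python
from collections import Counter

# Hypothetical raw records; None marks a missing value.
raw_rows = [
    {"customer_id": 1, "signup_country": "US", "monthly_spend": 42.0},
    {"customer_id": 2, "signup_country": None, "monthly_spend": 17.5},
    {"customer_id": 2, "signup_country": None, "monthly_spend": 17.5},  # exact duplicate
    {"customer_id": 3, "signup_country": "DE", "monthly_spend": None},
]


def completeness(rows):
    """Return the fraction of non-missing values for each column."""
    filled = Counter()
    for row in rows:
        for column, value in row.items():
            if value is not None:
                filled[column] += 1
    return {column: filled[column] / len(rows) for column in rows[0]}


def deduplicate(rows):
    """Drop exact duplicate rows, keeping the first occurrence."""
    seen = set()
    unique = []
    for row in rows:
        # Sort by column name so the key does not depend on dict ordering.
        key = tuple(sorted(row.items(), key=lambda item: item[0]))
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


profile = completeness(raw_rows)      # per-column completeness ratio
clean_rows = deduplicate(raw_rows)    # rows with exact duplicates removed
```

Profiling before deduplicating is a deliberate choice here: the completeness ratios then describe the data as it arrived, which is usually what you want to report back to the data owners.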

Explore: This step involves understanding the data and its patterns before building a model. It is used to understand the importance of different variables, the relationships between them, and the data distributions, and to form hypotheses. The activities within this step depend heavily on the data science question. This analysis helps in dropping irrelevant data, breaking an ambiguous question into multiple specific analysis questions, and identifying additional data to collect.
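A first pass at understanding a distribution can be as simple as the sketch below. It assumes a single numeric variable; the `order_values` sample and the `summarize` helper are made up for illustration, and only the standard `statistics` module is used.

```python
import statistics

# Hypothetical sample of a numeric variable (for example, order values).
order_values = [12.0, 15.5, 14.0, 13.5, 90.0, 16.0, 14.5, 15.0]


def summarize(values):
    """Return basic descriptive statistics for a list of numbers."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # quartile cut points
    return {
        "count": len(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "stdev": statistics.stdev(values),
        "iqr": q3 - q1,
        "min": min(values),
        "max": max(values),
    }


summary = summarize(order_values)

# A mean well above the median hints at right skew or outliers worth inspecting.
skew_hint = summary["mean"] - summary["median"]
```

In this made-up sample, the single large value pulls the mean far above the median, which is the kind of signal that would prompt a closer look before modeling.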

Model: This step involves creating statistical or machine learning models for predictive or prescriptive analysis. The outcome of this process is an inference, a prediction of a future event, or an explanation of the “cause” of an event. Although all other steps are integral parts, this is the meat of the data science pipeline. The Model layer has multiple steps, usually iterative in nature, to build an accurate model (choosing an algorithm, choosing the training and testing datasets, and tuning the model for accuracy).
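The iterative steps named above (choosing an algorithm, choosing training and testing datasets, and checking accuracy) can be sketched in miniature. The example below is only an illustration under stated assumptions: the data is synthetic, the "algorithm" is a one-variable least-squares line, and the helper names (`train_test_split`, `fit_line`, `rmse`) are hypothetical rather than taken from any library.

```python
import random


def train_test_split(rows, test_fraction=0.25, seed=0):
    """Shuffle a copy of rows and split it into training and testing lists."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def fit_line(pairs):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def rmse(pairs, slope, intercept):
    """Root mean squared error of the fitted line on the given pairs."""
    squared = [(y - (slope * x + intercept)) ** 2 for x, y in pairs]
    return (sum(squared) / len(squared)) ** 0.5


# Synthetic data (an assumption for this sketch): y is roughly 2x + 1 plus noise.
noise = random.Random(42)
data = [(x, 2 * x + 1 + noise.uniform(-0.5, 0.5)) for x in range(40)]

train, test = train_test_split(data)
slope, intercept = fit_line(train)        # fit on training data only
test_error = rmse(test, slope, intercept)  # evaluate on held-out data only
```

Fitting on one partition and scoring on the other is what keeps the error estimate honest; tuning would repeat this loop with different choices and compare the held-out error.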

Present: The findings from the model need to be presented, in most cases, to a non-technical audience. This usually takes the form of summary write-ups, charts, PowerPoint slides, etc. The presentation covers things such as the process used (in non-technical terms) to arrive at a conclusion, the tools used, the assumptions made, the patterns found, and the resulting description, prediction, and/or prescription. This exercise is usually part of the “development” phase of a machine learning project, or of answering a data science question that does not need to be “operationalized.”

Pitfalls to Avoid

Analysis & Modeling

- Avoid misinterpreting a pattern: check for confounders and alternative explanations.

- Do not spend too much time on the look and feel of the graphics in the exploratory analysis. The speed of analysis is more important during an exploratory analysis.

- Do not ignore missing values in your data. They can have a significant impact on the results of your analysis. Check the distribution of missing values.

- Do not perform confirmatory analysis on the same dataset used for exploratory analysis.

- Do not report any conclusions/estimates without a measure of uncertainty. Remember: we are “estimating,” not reporting the facts.

- Avoid “causation creep”: A strong correlation between two variables does not, by itself, imply causation.

- Do not interpret the outcome of exploratory analysis as inferential or predictive. This usually happens when a model is built and tested on the same sample.

- Whenever possible, perform inferential analysis on larger data samples.

- Do not test your model against a small dataset when performing predictive analysis.

- Avoid “overfitting”: Train and test the model on as few features as is practical. A model that depends on too many features within the training dataset is prone to “overfitting.” There are different techniques to avoid overfitting, which are not discussed in this blog.
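To make the confounder and “causation creep” pitfalls above concrete, the sketch below uses a tiny made-up dataset in which a grouping variable reverses the apparent relationship between two variables. The data, the `group` column, and the `pearson` helper are all assumptions chosen for illustration.

```python
def pearson(pairs):
    """Pearson correlation coefficient for a list of (x, y) pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    return sxy / (sxx * syy) ** 0.5


# Hypothetical observations: (group, x, y). "group" plays the confounder.
observations = [
    ("A", 1, 5), ("A", 2, 4), ("A", 3, 3),
    ("B", 6, 10), ("B", 7, 9), ("B", 8, 8),
]

# Correlation when the grouping variable is ignored.
overall = pearson([(x, y) for _, x, y in observations])

# Correlation computed separately within each group.
within = {}
for group in sorted({g for g, _, _ in observations}):
    within[group] = pearson([(x, y) for g, x, y in observations if g == group])
```

Here the pooled correlation is positive while the correlation inside each group is negative, so a conclusion drawn from the pooled data alone would point in the wrong direction.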

Data Science Project Guidelines

Understand the problem or the question we are trying to answer first.

- Break down the question (sometimes ambiguous) into one or more refined, specific “analysis” questions.

- This involves exploratory data analysis, data profiling, talking to SMEs (domain experts), and data experts.

Build a good quality Data Engineering Pipeline

- Build a high-quality data pipeline first to deliver the input data the data scientist needs, so that they can focus on model building.

- Data Quality: Ensure the data is cleansed and standardized. Poor data quality leads to wrong results and conclusions.

- Data Format: Ensure the final dataset delivered for the analysis is on the platform and in the format the data scientist will work with, so that they can focus on model building rather than spending time reformatting it.

- Clearly understand the objective and then build the pipeline. Only prepare the required data for analysis (unless the goal is to find out general patterns and mine the data for finding business opportunities, etc.).

- Don’t start drawing inferences and predicting before a solid exploratory and confirmatory analysis has been completed.

Sampling

- The sample should represent the population.

- Choose an appropriate sampling technique based on the need (stratified sampling, random sampling, systematic sampling, etc.).

- Perform resampling to estimate the precision/uncertainty of the population parameter and validate the model.

- Ultimately, the model should accurately describe the population.

- Use checklists: data analysis follows a process; it is not an “innovative” R&D activity. Remember, it is both an art and a science.
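The resampling guideline above can be illustrated with a percentile bootstrap, one common resampling technique among several. This is a minimal sketch: the sample values are invented, `bootstrap_ci` is a hypothetical helper, and the number of resamples and the confidence level are arbitrary defaults.

```python
import random
import statistics


def bootstrap_ci(sample, statistic=statistics.mean, n_resamples=2000,
                 confidence=0.95, seed=0):
    """Percentile bootstrap confidence interval for a statistic."""
    rng = random.Random(seed)
    # Resample with replacement, recompute the statistic, and sort the results.
    estimates = sorted(
        statistic(rng.choices(sample, k=len(sample)))
        for _ in range(n_resamples)
    )
    tail = (1 - confidence) / 2
    lower = estimates[int(tail * n_resamples)]
    upper = estimates[int((1 - tail) * n_resamples) - 1]
    return lower, upper


# Hypothetical sample drawn from the population of interest.
sample = [23.1, 25.4, 22.8, 24.9, 26.3, 23.7, 25.0, 24.2, 22.5, 25.8]

point_estimate = statistics.mean(sample)
low, high = bootstrap_ci(sample)
```

Reporting `point_estimate` together with `(low, high)` gives the reader a measure of uncertainty alongside the estimate, rather than a bare number.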

Evaluate Machine Learning Models

- Machine learning models need to be evaluated for their accuracy and may involve multiple iterations of optimizing them.

- Do not rely on just one method to evaluate the model.

- Refine your models based on the changes in your production data.

- Score and monitor model performance in production as well.
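One reason not to rely on a single evaluation method is that a single metric can hide a useless model. The sketch below, built on made-up labels and a hypothetical `classification_metrics` helper, computes several standard metrics for a binary classifier so they can be compared side by side.

```python
def classification_metrics(actual, predicted, positive=1):
    """Compute accuracy, precision, recall, and F1 for binary labels."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a == positive and p == positive)
    fp = sum(1 for a, p in pairs if a != positive and p == positive)
    fn = sum(1 for a, p in pairs if a == positive and p != positive)
    tn = len(pairs) - tp - fp - fn
    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical imbalanced labels: 2 positives out of 20.
actual = [1, 1] + [0] * 18
always_negative = [0] * 20  # a "model" that never predicts the positive class

metrics = classification_metrics(actual, always_negative)
```

On this imbalanced example the accuracy looks high, while recall and F1 are zero because the model never finds a positive case. Looking at the metrics together exposes the problem.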

Tell a compelling story. It should include:

- Details of the business problem and questions we are trying to answer.

- The process followed, the data used, and cleansing performed.

- The findings, described using statistics and models and supported by clear, well-chosen visualizations.

Reproducibility

- Reproducibility helps create consistent results.

- Scripting: Save your scripts with detailed comments in them (even those scripts that we use for analysis and discovery). This helps in understanding the whole process that was followed and the basis for coming to certain conclusions.

- Saved Datasets: The datasets used for the analysis need to be saved. This helps in rerunning and reproducing the model output the way it was done initially. Otherwise, when the dataset changes (if we don’t save it), the model may produce different results compared to the initial run.

- Document/save details of the parameters used for tuning and the method used to select the parameters.

- Save all the visualizations used in the process.

- Make a note of the infrastructure details and the environment used.
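Several of the items above (saved datasets, tuning parameters, environment details) can be captured in a small run manifest stored next to the analysis scripts. The sketch below is one possible shape for such a manifest, not a standard: the field names, the `build_manifest` and `dataset_fingerprint` helpers, and the example parameters are all assumptions.

```python
import hashlib
import json
import platform
import sys


def dataset_fingerprint(rows):
    """SHA-256 hash of the dataset's canonical JSON form."""
    canonical = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def build_manifest(rows, params, seed):
    """Collect what is needed to rerun an analysis the same way."""
    return {
        "dataset_sha256": dataset_fingerprint(rows),
        "row_count": len(rows),
        "params": params,
        "seed": seed,
        "python_version": sys.version.split()[0],
        "platform": platform.platform(),
    }


# Hypothetical dataset and tuning parameters.
rows = [{"x": 1, "y": 2.5}, {"x": 2, "y": 4.1}]
manifest = build_manifest(
    rows, params={"learning_rate": 0.1, "max_depth": 3}, seed=42
)

# Serialize so the manifest can be saved alongside scripts and outputs.
manifest_json = json.dumps(manifest, indent=2, sort_keys=True)
```

If a later run produces different results, comparing the stored fingerprint with a fresh one is a quick way to tell whether the dataset changed.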

Operationalizing

- Automate the code.

- Use checklists and perform code reviews, just like any other coding project.

- Use production datasets to train the model.

Summary

Data science is both an art and a science that involves finding patterns in data, predicting future outcomes, and prescribing actions. Creating a data pipeline that provides good-quality data as the input for data science activity is crucial to building accurate models.
