FMCG ETL SOLUTION

ORGANIZATION

Our client, a leading fast-moving consumer goods (FMCG) company operating in more than 50 countries, required a thorough analysis of customer behavior in Asia to make its campaigns, targeted marketing, and product design more efficient and effective. However, poor-quality consumer data and the absence of standardized data collection and validation processes in the region posed significant challenges for customer segmentation and future product strategy.

CHALLENGE

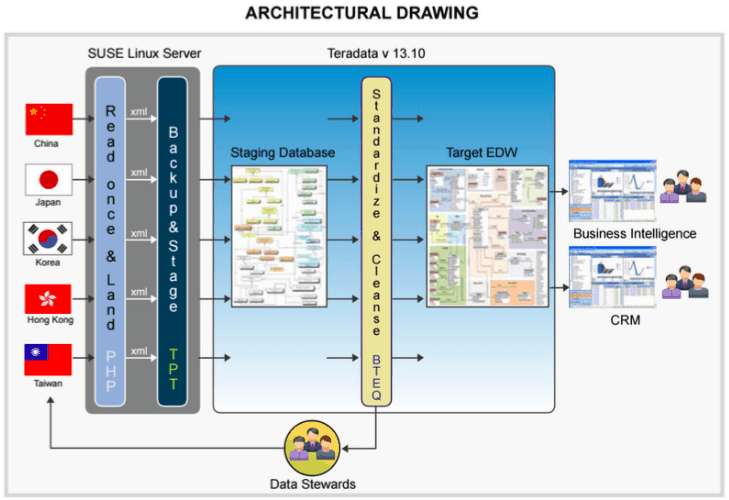

Consumer data from various Asian markets was collected by third-party agencies and provided to the client on request. The data was heterogeneous on several levels: it came from different markets (including Japan, Korea, China, Hong Kong, and Taiwan), through diverse channels (websites, online retail, surveys, and other touchpoints), and from different database platforms (Oracle, SQL Server, and MySQL).

Because there were no standardized processes for collecting, standardizing, and cleansing the data, validating and matching it became increasingly difficult. The resulting inconsistencies prevented the client from using its CRM and BI applications effectively.

The client required a data standardization and validation process along with an automated solution to extract and load consumer data from various sources into a centralized warehouse, called 1-Consumer Place, to provide a 360-degree consumer view.
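As an illustration only (the case study does not describe the matching rules actually used), a 360-degree consumer view depends on recognizing that records arriving from different sources and channels belong to the same consumer. The Python sketch below assumes a simple deterministic match key, a trimmed and lower-cased e-mail address; the record layout, field names, and `match_key`/`build_consumer_view` helpers are hypothetical.

```python
from collections import defaultdict

# Hypothetical records as they might arrive from different sources and channels.
records = [
    {"source": "jp_web", "email": " Yuki.Tanaka@Example.com ", "channel": "website"},
    {"source": "kr_survey", "email": "minji.kim@example.com", "channel": "survey"},
    {"source": "jp_retail", "email": "yuki.tanaka@example.com", "channel": "online retail"},
]


def match_key(record: dict) -> str:
    """Assumed deterministic match key: the trimmed, lower-cased e-mail address."""
    return record["email"].strip().lower()


def build_consumer_view(records: list[dict]) -> dict[str, list[dict]]:
    """Group all records that share a match key under one consumer profile."""
    view: dict[str, list[dict]] = defaultdict(list)
    for record in records:
        view[match_key(record)].append(record)
    return dict(view)


view = build_consumer_view(records)
for key, touchpoints in view.items():
    # One line per consumer, listing every channel the consumer was seen on.
    print(key, sorted(r["channel"] for r in touchpoints))
```

Here the two Japanese records collapse into one profile seen on both the website and online retail. A production matcher would typically combine several attributes and tolerate fuzzy differences; exact-key grouping is simply the easiest version to show.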

SOLUTION

XTIVIA Developed a Governance Framework That Facilitated:

- Registration and configuration of third-party data sources with the client.

- Client approval process for data sources.

- Data profiling and validation.

- Mapping data sources to target warehouse data elements.
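The profiling and validation step of the framework can be illustrated with a small Python sketch. It is not XTIVIA's implementation: the column names, sample rows, and rule set (the five markets named in this case study as ISO 3166-1 alpha-2 codes, plus a four-digit birth year) are assumptions chosen for the example. A column profile and a list of rule violations are the kind of evidence a client could review before approving a source.

```python
from collections import Counter

# Assumed rule: only the markets named in this case study, as ISO 3166-1 alpha-2 codes.
ALLOWED_COUNTRIES = {"JP", "KR", "CN", "HK", "TW"}


def profile(rows: list[dict]) -> dict[str, dict]:
    """Per-column profile: share of empty values, distinct count, most common value."""
    report = {}
    for column in rows[0]:
        values = [row.get(column, "") for row in rows]
        filled = [v for v in values if v.strip()]
        report[column] = {
            "empty_rate": 1 - len(filled) / len(values),
            "distinct": len(set(filled)),
            "most_common": Counter(filled).most_common(1),
        }
    return report


def validate(row: dict) -> list[str]:
    """Return the rule violations for one row (an empty list means the row passes)."""
    errors = []
    if not row.get("consumer_id", "").strip():
        errors.append("missing consumer_id")
    if row.get("country") not in ALLOWED_COUNTRIES:
        errors.append(f"unexpected country {row.get('country')!r}")
    year = row.get("birth_year", "")
    if year and not (year.isdigit() and len(year) == 4):
        errors.append(f"malformed birth_year {year!r}")
    return errors


sample = [  # hypothetical rows from a newly registered third-party source
    {"consumer_id": "1001", "country": "JP", "birth_year": "1985"},
    {"consumer_id": "1002", "country": "KR", "birth_year": ""},
    {"consumer_id": "1003", "country": "Korea", "birth_year": "90"},
]

for column, stats in profile(sample).items():
    print(column, stats)
for row in sample:
    print(row["consumer_id"], validate(row))
```

In this sample the profile shows three distinct spellings in the `country` column, and validation flags row 1003 for both a non-standard country value and a two-digit year: findings that would feed the mapping of source fields to warehouse data elements.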

XTIVIA Architected an ELT Solution That Includes:

- Landing Source Data:
  - Extracting data from heterogeneous sources using PHP and writing it in XML format to the ETL server.
  - Automatically backing up the landed source data for regulatory compliance.

- Staging and Transformation:
  - Loading the XML data into a staging database using Teradata Parallel Transporter (TPT).
  - Performing data cleansing and standardization using BTEQ and SQL scripts.
  - Routing records rejected during cleansing to data stewards for review and correction.

- Data Loading and Compliance:
  - Loading the cleansed data into the target data model.
  - Ensuring each data source is read only once to minimize the impact on source systems.
  - Storing data from Oracle, SQL Server, and MySQL in XML format for backup and compliance.
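The landing and staging pattern above can be sketched in Python. In the project itself, extraction used PHP, loading used Teradata Parallel Transporter, and cleansing used BTEQ and SQL scripts; this sketch only mirrors the pattern. Each source is read once and landed as XML, downstream steps work from that landed copy, and records that fail cleansing are set aside with a reason for data stewards instead of being silently dropped. The field names, country aliases, and rejection rules are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical standardization rules; a real project would maintain these centrally.
COUNTRY_ALIASES = {"JAPAN": "JP", "KOREA": "KR", "CHINA": "CN", "HONG KONG": "HK", "TAIWAN": "TW"}
VALID_COUNTRIES = set(COUNTRY_ALIASES.values())


def rows_to_xml(source: str, rows: list[dict]) -> str:
    """Land extracted rows as one XML document, one <record> element per row."""
    root = ET.Element("extract", attrib={"source": source})
    for row in rows:
        record = ET.SubElement(root, "record")
        for field, value in row.items():
            ET.SubElement(record, field).text = value
    return ET.tostring(root, encoding="unicode")


def xml_to_rows(xml_text: str) -> list[dict]:
    """Read the landed XML back into rows for staging (empty elements become "")."""
    root = ET.fromstring(xml_text)
    return [{child.tag: child.text or "" for child in record} for record in root.findall("record")]


def cleanse(rows: list[dict]) -> tuple[list[dict], list[dict]]:
    """Standardize country and e-mail fields; route failing rows to a reject list."""
    clean, rejects = [], []
    for row in rows:
        country = row.get("country", "").strip().upper()
        country = COUNTRY_ALIASES.get(country, country)  # map country names to ISO codes
        email = row.get("email", "").strip().lower()
        reasons = []
        if country not in VALID_COUNTRIES:
            reasons.append(f"unknown country {row.get('country')!r}")
        if "@" not in email:
            reasons.append(f"invalid email {row.get('email')!r}")
        if reasons:
            rejects.append({**row, "reason": "; ".join(reasons)})  # queued for data stewards
        else:
            clean.append({**row, "country": country, "email": email})
    return clean, rejects


extracted = [  # hypothetical rows pulled once from a source system
    {"consumer_id": "1001", "country": " japan ", "email": "Yuki@Example.com"},
    {"consumer_id": "1002", "country": "KR", "email": "minji.example.com"},
    {"consumer_id": "1003", "country": "Taiwan", "email": "wei@example.com"},
]

landed_xml = rows_to_xml("agency_feed", extracted)  # the landed copy doubles as the backup
clean, rejects = cleanse(xml_to_rows(landed_xml))
print([r["consumer_id"] for r in clean])  # ['1001', '1003']
print(rejects[0]["reason"])               # invalid email 'minji.example.com'
```

Keeping the reject reason on each record is what lets a data steward correct the source value and resubmit it, rather than re-extracting from the source system.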

RESULTS

- Single View of the Customer: Provided a unified customer view for different groups, including marketing and new product development.

- Earlier Availability of Accurate Data: Enabled faster access to reliable data for CRM and BI applications.

- Improved Campaign Success: Enhanced customer reach and campaign effectiveness by leveraging accurate behavioral data.

- Effective Targeted Marketing: Boosted the precision of marketing efforts, leading to better engagement.

- Informed Product Design Decisions: Facilitated data-driven decisions for product design, aligning with customer behavior and preferences.

KEYWORDS

FMCG, product design, consumer data, data collection, data validation, customer segmentation, product strategies, heterogeneous data sources, websites, online retail, surveys, CRM applications, BI applications, data standardization, data cleansing, data matching, automated solution, centralized warehouse, 1-Consumer Place, 360-degree consumer view, governance framework, data profiling, ELT solution, PHP, XML format, ETL server, Teradata Parallel Transporter, BTEQ, SQL scripts, data stewards, data model, regulatory compliance.

SOFTWARE

- Teradata® 13.10

- Teradata Parallel Transporter (TPT) 13.10

- Oracle® 10g

- MySQL™ 5.0

- SQL Server® 2008

- SUSE™ Linux

XTIVIA CORPORATE OFFICE

304 South 8th Street, Suite 201

Colorado Springs, CO 80905 USA

Additional offices in New York, New Jersey, Texas, Virginia, and Hyderabad, India.

USA toll-free: 888-685-3101, ext. 2

International: +1 719-685-3100, ext. 2

Fax: +1 719-685-3400
