As cloud infrastructure has become more and more critical to businesses, both large and small, various tools have sprung up to help companies manage their rapidly expanding cloud footprint. One of the most essential cloud tools developed in the last ten years has been HashiCorp’s Terraform. If you are managing any sort of cloud implementation, chances are that you are leveraging Terraform in some way… but are you doing so in the right way? In this article, I introduce you to some Terraform best practices from our real-world experiences, but let me start from the beginning.

What is Terraform?

Terraform is a tool developed by HashiCorp that provides an abstraction layer for describing and provisioning many types of cloud infrastructure. Through its provider plugins, it works with all major cloud providers, including AWS, Google Cloud, Azure, and IBM Cloud, and even with on-premises platforms like VMware vSphere.

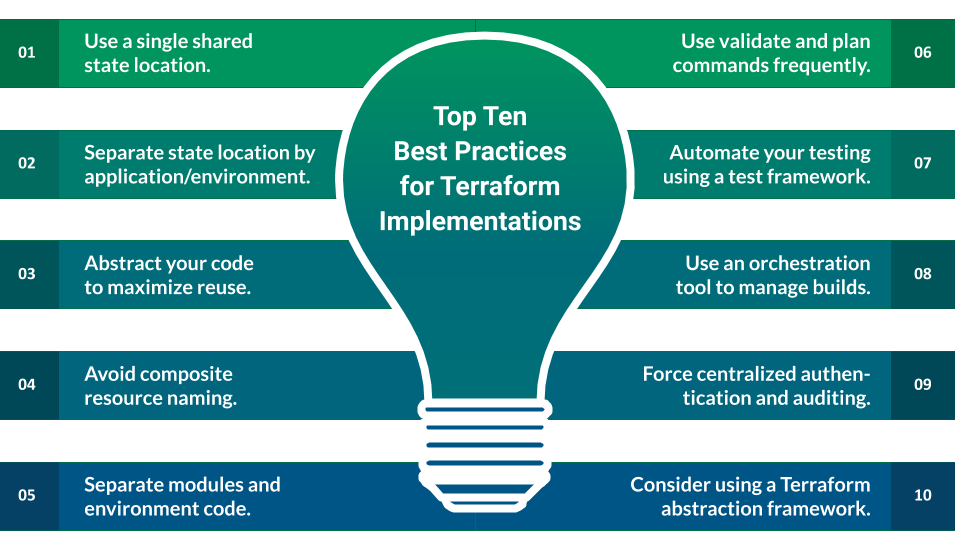
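To make the idea of an abstraction layer concrete, here is a minimal sketch of a Terraform configuration, assuming the AWS provider. The region, bucket name, and provider version constraint are hypothetical placeholders. You declare the end state you want, and the provider translates it into the cloud platform's API calls.

```hcl
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0" # example constraint; pin to the version you actually test with
    }
  }
}

# The provider translates Terraform resources into AWS API calls.
provider "aws" {
  region = "us-east-1" # hypothetical region
}

# Declare the desired end state; Terraform works out whether to create or update it.
resource "aws_s3_bucket" "example" {
  bucket = "example-terraform-demo-bucket" # hypothetical; S3 bucket names must be globally unique
}
```

Running terraform init, terraform plan, and terraform apply in the directory containing this file follows the same workflow regardless of provider; only the provider and resource types change.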

While Terraform is used almost ubiquitously across the cloud infrastructure landscape, many implementations still do not use Terraform to its full potential. This article highlights ten best practices for improving your Terraform implementation; following these recommendations will significantly improve both the quality of your Terraform projects and the speed at which you can implement them.

Terraform Best Practice #1: Always set up a shared state location.

Terraform keeps a persistent record of your infrastructure, called the state, which maps the resources declared in your code to the real objects in your cloud account. Terraform creates the state the first time it provisions resources and updates it on every subsequent run. The state is necessary because your cloud account does not maintain a complete and accurate record of which objects Terraform manages or how they have changed over time; Terraform compares the state, your code, and the live infrastructure to determine which resources have changed and what changes need to be applied to make your cloud infrastructure match your Terraform code.

Several backends are available for storing Terraform state, including a local file system, a shared file system, a shared object store such as Amazon S3, or even a relational database. Whichever one you choose, it is considered a best practice to keep a single, common copy of the state, regardless of the number of developers working on your infrastructure code. It is also critical that you enable the backend’s state locking so that only one provisioning operation can run against that state at any given time. Having multiple copies of your Terraform state will inevitably lead to infrastructure drift and conflicting changes, and keeping it in a single shared location provides a secondary benefit: it allows you to enforce security and authorization restrictions on who can update your infrastructure and how they can do it.
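As one illustration, here is a minimal sketch of a shared, locked state location using the S3 backend. It assumes that the S3 bucket and a DynamoDB table (with a string partition key named LockID) already exist; the bucket, key, and table names are hypothetical.

```hcl
terraform {
  backend "s3" {
    bucket         = "example-terraform-state"  # hypothetical, pre-created S3 bucket
    key            = "app/terraform.tfstate"    # path of this state object within the bucket
    region         = "us-east-1"
    encrypt        = true                       # server-side encryption for the state object
    dynamodb_table = "example-terraform-locks"  # hypothetical lock table with a "LockID" string key
  }
}
```

Backend blocks cannot reference variables, so the values are written literally (or supplied with terraform init -backend-config). Recent Terraform releases also offer S3-native locking through the use_lockfile argument; check the S3 backend documentation for the version you run before choosing between the two.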

Terraform Best Practice #2: Use separate state locations based on logical environment boundaries.

Terraform is not opinionated about how you structure your infrastructure code. It allows you to implement anything from a single monolithic configuration that describes all of your cloud resources across multiple environments to small, atomic components that are provisioned and tracked individually. As with most systems of this nature, the right level of decomposition lies somewhere between the two extremes: it is considered a best practice to group related resources by environment and application and to maintain a separate Terraform state location for each group. In practice, this means that the number of state locations will grow, but a strict logical separation between environments and applications keeps each state small, so plans and applies run faster (by default, Terraform refreshes every resource in a state on each run), and it prevents changes to one environment or application from colliding with another.
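A simple way to get one state per environment and application is to give each grouping its own directory with its own backend key. The sketch below assumes the S3 backend and a hypothetical directory layout; the two backend blocks live in two separate files, shown together here for comparison.

```hcl
# environments/staging/app/backend.tf  (hypothetical layout)
terraform {
  backend "s3" {
    bucket         = "example-terraform-state"
    key            = "staging/app/terraform.tfstate" # one state object per environment + application
    region         = "us-east-1"
    dynamodb_table = "example-terraform-locks"
  }
}

# environments/prod/app/backend.tf
terraform {
  backend "s3" {
    bucket         = "example-terraform-state"
    key            = "prod/app/terraform.tfstate" # separate key, so staging runs never touch prod state
    region         = "us-east-1"
    dynamodb_table = "example-terraform-locks"
  }
}
```

Running Terraform in environments/staging/app only reads and locks the staging state. For stronger isolation, production state can also live in a separate bucket or cloud account with its own access controls.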

Terraform Best Practice #3: Decompose and abstract your Terraform code to maximize reuse.

Like any other code, Terraform code can be written poorly or written well; poorly written infrastructure code is slow, buggy, and difficult to maintain. Many of the tenets underlying good application code also apply to infrastructure code: concepts like DRY (Don’t Repeat Yourself), the Single Responsibility Principle, and YAGNI (You Aren’t Gonna Need It) apply to Terraform code just as they do to traditional application code. To facilitate good design, Terraform allows you to break your infrastructure down into modules: self-contained infrastructure components with well-defined inputs and outputs. You can use these modules to create reusable components tailored to your application, then combine those components into a representation of your infrastructure that is reusable and maintainable. For example, suppose you have an application that uses an S3 bucket, an RDS instance, and an EC2 instance. An appropriate representation of this in Terraform would be separate modules for the S3 bucket, the RDS instance, and the EC2 instance, plus another module that uses each of them to represent the application as a whole; that final module is the one referenced in your actual environment provisioning code. A multi-level structure like this lets you reuse the basic resource modules for other applications and the fully composed application module across multiple environments.
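The layering described above might look like the following sketch. The module paths (../s3-bucket, ../rds-instance, ../ec2-instance) and their input and output names (bucket_name, identifier, name, arn, and so on) are hypothetical; they assume resource modules you would write yourself.

```hcl
# modules/app/main.tf: hypothetical composite module built from three resource modules
variable "environment" {
  type = string
}

module "assets_bucket" {
  source      = "../s3-bucket"
  bucket_name = "example-app-${var.environment}-assets"
}

module "database" {
  source         = "../rds-instance"
  identifier     = "example-app-${var.environment}-db"
  instance_class = "db.t3.micro"
}

module "web_server" {
  source        = "../ec2-instance"
  name          = "example-app-${var.environment}-web"
  instance_type = "t3.micro"
}

# Assumes the s3-bucket module declares an output named "arn".
output "assets_bucket_arn" {
  value = module.assets_bucket.arn
}

# environments/staging/main.tf: the environment only references the composite module
module "app" {
  source      = "../../modules/app"
  environment = "staging"
}
```

The environment code stays small: provisioning production would be another short module "app" block with environment = "prod", while the resource modules remain available to other applications.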

Terraform Best Practice #4: Be wary of programmatically generating resource names.

One pitfall that almost everyone encounters at least once while working with Terraform is dynamic resource naming. It is very tempting to generate your cloud resource names algorithmically, and it seems sensible to do so at the lowest level. For example, you might use variables such as environment name, application name, and region to name a fleet of compute instances dynamically. The problem arises if you ever need to change that algorithm. Terraform tracks each resource by its address in your code and records attributes such as its name in state; it has no independent way to recognize that a resource with a new name is the same object as one with an old name. If you generate names in the form $application_$environment_$region_$count and later need to switch to $application_$environment_$region_$availabilityzone_$count, every affected resource changes at once: resources whose names cannot be updated in place (an S3 bucket, for example) will be planned for destruction and re-creation, and if the generated names are also used as resource keys (for instance, with for_each), Terraform will treat the existing resources as removed and the renamed ones as entirely new. At that point, you will either need to manually reconcile your existing resources with the new naming convention or destroy them all and allow Terraform to create a new set. This is especially insidious when the dynamic naming is handled in a low-level module reused by several applications across the enterprise; a change like this can force large-scale updates to applications across the infrastructure.

To work around this, if you require dynamic naming, make sure that you handle the naming logic in the composite application module rather than at the low-level resource module level, passing in the entire resource name to the low-level module. This way, if the naming convention ever does change, you can manage the logic needed to handle both old and new infrastructure at the application level.
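Here is a minimal sketch of that split, assuming an EC2 resource module and a composite application module. The variable names (name, ami_id, application, environment, region, availability_zone) and the use_legacy_naming flag are hypothetical; declarations for the composite module's variables are omitted for brevity.

```hcl
# modules/ec2-instance/main.tf: the low-level module takes the complete name as input
variable "name" {
  type        = string
  description = "Full resource name, computed by the calling module"
}

variable "ami_id" {
  type = string
}

resource "aws_instance" "this" {
  ami           = var.ami_id
  instance_type = "t3.micro"
  tags = {
    Name = var.name # no naming logic here; use exactly what the caller passed in
  }
}

# modules/app/main.tf: the naming convention lives in the composite module
locals {
  # Hypothetical flag lets existing deployments keep the old convention while new ones adopt the new one.
  instance_name = (
    var.use_legacy_naming
    ? "${var.application}_${var.environment}_${var.region}"
    : "${var.application}_${var.environment}_${var.region}_${var.availability_zone}"
  )
}

module "web_server" {
  source = "../ec2-instance"
  name   = local.instance_name
  ami_id = var.ami_id
}
```

Because only the application module knows the convention, changing it touches one application at a time instead of every consumer of the shared EC2 module.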

Terraform Best Practice #5: Keep your modules and your environment implementation code separate.

This ties into best practices #2 and #3: not only should you separate your logic into modules and maintain a separate state per environment and application, but you should also keep Terraform module code and Terraform provisioning code in separate locations. In general, we do this by maintaining modules in their own Git repository and maintaining a separate Git repository for each environment that we need to provision. This facilitates reuse and collaboration by allowing you to version and maintain your module library independently of your environment-specific code.
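With modules in their own repository, an environment repository can reference them by Git URL and pin a specific release. The repository URL, subdirectory, and tag below are hypothetical.

```hcl
# main.tf in a hypothetical environment repository (for example, infra-staging)
module "app" {
  # "//app" selects a subdirectory of the module repository; "?ref=" pins a tag or commit.
  source = "git::https://example.com/acme/terraform-modules.git//app?ref=v1.4.0"

  environment = "staging"
}
```

Pinning ref lets you release a new module version, bump the tag in the staging repository first, and promote it to production only after it has been verified.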

Terraform Best Practice #6: Maintain a strict policy of reviewing terraform validate and plan outputs before allowing Terraform changes to be applied to an environment.

This best practice seems fairly straightforward, but it is often overlooked for the sake of expediency. The terraform CLI provides a number of commands that you can run against your infrastructure code; two that are critical to a successful Terraform implementation are validate and plan. The terraform validate command checks that your configuration is syntactically valid and internally consistent (for example, that references and argument types are correct); it does not access remote state or your cloud provider, so it will not tell you which resources will be created or modified. Infrastructure developers should run it regularly to verify their Terraform code. The terraform plan command takes your Terraform code, reads your environment’s state, refreshes it against the real infrastructure, and lists the changes that will be applied when you execute the code against that environment. Running and reviewing this command’s output is a critical step in an infrastructure-as-code pipeline: it gives you a chance to catch and resolve any issues in the code before you modify your cloud environment. Saving the reviewed plan with terraform plan -out=FILE and then applying that file with terraform apply FILE ensures that exactly the changes you reviewed are the ones applied.

Terraform Best Practice #7: Use an automated testing framework to write unit and functional tests that validate your Terraform modules.

Automated testing is every bit as important for infrastructure code as it is for application code. As Terraform has grown in popularity, many options for testing Terraform code have become available. At XTIVIA, we most often use Terratest for this purpose; this framework allows you to write tests in Go that deploy your infrastructure code to a sandbox cloud account and then validate that the results are as expected. Note that these tests should be used in addition to static checks such as a Terraform linter or terraform validate, as discussed in best practice #6. Another option for automated Terraform validation is Kitchen-Terraform, a set of Test Kitchen plugins; this path might be a bit easier for shops that are more familiar with Ruby, Test Kitchen, and InSpec.
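Terraform itself also ships a built-in test framework (terraform test, available since Terraform 1.6) that can complement Terratest for fast, plan-level unit tests. This is a different tool from the Terratest workflow described above, shown here only as a sketch. It assumes a hypothetical S3 bucket module that declares variable "bucket_name" and resource "aws_s3_bucket" "this"; mock_provider requires Terraform 1.7 or later.

```hcl
# modules/s3-bucket/tests/naming.tftest.hcl (hypothetical module and file)

# Replace the real AWS provider with a mock, so the test needs no cloud credentials.
mock_provider "aws" {}

variables {
  bucket_name = "example-app-staging-assets"
}

run "bucket_uses_supplied_name" {
  command = plan # evaluate the plan only; nothing is created

  assert {
    condition     = aws_s3_bucket.this.bucket == var.bucket_name
    error_message = "The bucket name should come straight from the bucket_name input."
  }
}
```

Running terraform test from the module's root directory picks up files in its tests directory. Checks like this are cheap enough to run on every commit, while Terratest-style tests that deploy real resources can run less often against a sandbox account.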

Terraform Best Practice #8: Require a uniform authentication scheme and auditing mechanism that clearly tracks which principal triggered a Terraform operation, particularly in production environments.

This best practice can be handled at multiple levels of an infrastructure-as-code implementation. If individual engineers run Terraform against your cloud environment directly, it should be handled at the authentication and authorization level of the target cloud platform. If you use a shared continuous integration tool such as Jenkins to execute Terraform code, it should be handled in the CI/CD tool. The goal is for your Terraform pipeline to maintain discrete access control and auditing information for each user who can access the system, and to record information about each Terraform execution: which user triggered each update to your cloud infrastructure, when it was triggered, and what changes each execution applied.
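On AWS, one way to tie cloud-level audit records back to a person is to have Terraform assume a deployment role with a session name that identifies the operator, and to record API activity with CloudTrail. This is a sketch under stated assumptions: the role ARN, the operator variable, and the audit bucket are hypothetical, and the bucket needs a policy that allows CloudTrail to write to it.

```hcl
variable "operator" {
  type        = string
  description = "Hypothetical: ID of the engineer or CI job running Terraform (letters, digits, and =,.@- only)"
}

provider "aws" {
  region = "us-east-1"

  assume_role {
    role_arn     = "arn:aws:iam::123456789012:role/terraform-deployer" # hypothetical role
    session_name = "terraform-${var.operator}" # shows up in CloudTrail for every API call in this run
  }
}

# Record API activity across all regions so each change can be traced to a principal.
resource "aws_cloudtrail" "infrastructure_audit" {
  name                          = "infrastructure-audit"
  s3_bucket_name                = "example-audit-logs" # hypothetical bucket with a CloudTrail write policy
  include_global_service_events = true
  is_multi_region_trail         = true
}
```

CloudTrail records answer who changed what and when at the cloud level; a CI/CD tool’s job history complements them with which plan was applied in each run.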

Terraform Best Practice #9: Use a Continuous Integration/Continuous Delivery (CI/CD) or shared orchestration tool to execute your Terraform operations from a single common location.

Continuous integration (CI) and continuous delivery (CD) are natural fits for infrastructure-as-code projects; using a centralized CI/CD tool to execute your Terraform commands against your environments provides a simple way to ensure that you are following several of the best practices in this document, including best practices #1, #6, and #8. In addition, if you are using a cloud vendor’s source control and continuous integration services (e.g., AWS CodeCommit/CodeBuild or Azure Repos/Pipelines), they often include built-in facilities that help you secure and execute your Terraform code to create resources in your environment. One example is AWS CodeBuild: when you run Terraform in CodeBuild, it can use the IAM service role assigned to your CodeBuild project, which removes the need for separately managed and maintained IAM credentials and improves overall system security. These tools let you compose a multi-stage infrastructure build process that incorporates automated testing, validation, code reviews, and a consistent execution platform, minimizing the risk of issues cropping up in your infrastructure management process.
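As a sketch of the CodeBuild approach, the configuration below defines a build project whose service role supplies Terraform’s AWS credentials. The project name, repository URL, and role name are hypothetical; the permission policies the role needs, and the buildspec.yml that installs Terraform and runs init, plan, and apply, are not shown.

```hcl
# Role that CodeBuild assumes; Terraform running inside the build inherits its credentials.
resource "aws_iam_role" "terraform_runner" {
  name = "terraform-runner" # hypothetical; attach only the permissions your infrastructure needs
  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect    = "Allow"
      Principal = { Service = "codebuild.amazonaws.com" }
      Action    = "sts:AssumeRole"
    }]
  })
}

resource "aws_codebuild_project" "terraform_apply" {
  name         = "terraform-apply-prod" # hypothetical
  service_role = aws_iam_role.terraform_runner.arn

  artifacts {
    type = "NO_ARTIFACTS"
  }

  environment {
    compute_type = "BUILD_GENERAL1_SMALL"
    image        = "aws/codebuild/standard:7.0"
    type         = "LINUX_CONTAINER"
  }

  source {
    type     = "CODECOMMIT"
    location = "https://git-codecommit.us-east-1.amazonaws.com/v1/repos/example-infra-prod" # hypothetical repo
  }
}
```

No access keys appear anywhere: CodeBuild supplies temporary credentials for the service role, so there is nothing long-lived to rotate or leak.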

Terraform Best Practice #10: Consider using an additional abstraction layer on top of Terraform to facilitate reuse.

Many of these best practices are already built into existing frameworks that sit on top of Terraform; two that we at XTIVIA have worked with extensively are Terragrunt and Runway. These tools provide opinionated structures and workflows that streamline the design of reusable, consistent Terraform code that follows best practices. They allow you to get started quickly while ensuring that your project uses Terraform optimally for performance and reuse. We strongly recommend using one of these frameworks to reduce the learning curve for well-designed Terraform implementations.
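For a flavor of what such a framework adds, here is a sketch of a Terragrunt layout: a root file that generates a per-directory S3 backend configuration (best practices #1 and #2) and a child file that pulls a pinned module from a separate repository (best practice #5). File names, bucket, table, and repository URL are hypothetical, and Terragrunt conventions have shifted between releases (for example, the preferred name of the root file), so check the documentation for your version.

```hcl
# root.hcl at the top of the environments repository (hypothetical)
remote_state {
  backend = "s3"
  generate = {
    path      = "backend.tf"
    if_exists = "overwrite_terragrunt"
  }
  config = {
    bucket         = "example-terraform-state"
    key            = "${path_relative_to_include()}/terraform.tfstate" # one state per directory
    region         = "us-east-1"
    encrypt        = true
    dynamodb_table = "example-terraform-locks"
  }
}

# staging/app/terragrunt.hcl
include "root" {
  path = find_in_parent_folders("root.hcl")
}

terraform {
  source = "git::https://example.com/acme/terraform-modules.git//app?ref=v1.4.0"
}

inputs = {
  environment = "staging"
}
```

Each new environment or application directory then needs only a few lines, and its state key is derived from its path rather than typed by hand.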

Terraform Best Practices Summary

Terraform is an extremely flexible tool for managing cloud infrastructure; however, that flexibility comes with a cost in terms of the complexity of an average Terraform implementation. Following these ten best practices can help you avoid pitfalls in Terraform usage and smooth your path to a fully automated infrastructure-as-code pipeline.

If you have questions on how you can best leverage Terraform, or other cloud infrastructure tools and/or need help with your cloud infrastructure project, please engage with us via comments on this blog post, or reach out to us here.