Initial triaging steps

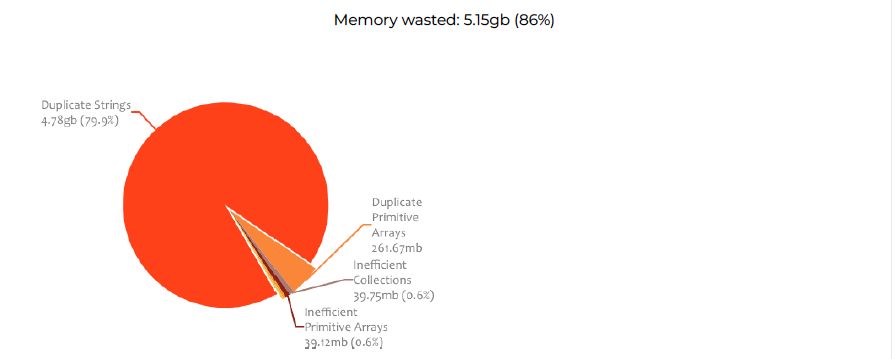

The Systems Engineering team at XTIVIA took thread dumps, heap dumps, and started analysis of Garbage collection logs to get an overview of the JVM health.

Thread dump analysis of the Liferay Server 02 showed a number of blocked threads that are causing high CPU. All the threads are for SASS Compilation.

com.liferay.portal.scripting.ruby.RubyExecutor-1022 - priority:10 - threadId:0x00007f7a2810a000 - nativeId:0x4a0f - state:BLOCKED

stackTrace:

java.lang.Thread.State: BLOCKED (on object monitor)

at org.jruby.javasupport.JavaClass.createJavaClass(JavaClass.java:1145)

- waiting to lock <0x00000005e4fc2e90>From the analysis of heap dumps, the objects that are occupying heap belong to

com.liferay.saml.model.impl.SamlSpSessionImpl._assertionXml

Major Breakthrough

The Systems Engineering team dove into data captured by the Riverbed SteelCentral AppInternals APM tool. On drilling down into the login transactions, the APM tool lists the queries that were taking a long time to execute based on the logins.

They came across a user account that is logging into the environment once every three minutes. The team discovered that, on the rogue user login, the user is pulling non-cached DB records from the database and the database query is creating > 20,000 objects in the JVM for every login.

select samlspsess0_.samlSpSessionId AS samlSpSe1_?_, samlspsess0_.companyId AS companyId123_, samlspsess0_.userId AS userId123_, samlspsess0_.userName AS userName123_, samlspsess0_.createDate AS createDate123_, samlspsess0_.modifiedDate AS modified6_?_, samlspsess0_.samlSpSessionKey AS samlSpSe7_?_, samlspsess0_.assertionXml AS assertio8_?_, samlspsess0_.jSessionId AS jSessionId123_, samlspsess0_.nameIdFormat AS nameIdF10_?_, samlspsess0_.nameIdNameQualifier AS nameIdN11_?_, samlspsess0_.nameIdSPNameQualifier AS nameIdS12_?_, samlspsess0_.nameIdValue AS nameIdV13_?_, samlspsess0_.sessionIndex AS session14_?_, samlspsess0_.terminated_ AS terminated15_?_ FROM SamlSpSession samlspsess0_ WHERE (samlspsess0_.nameIdValue=? )Root cause

- There was a rogue user account that was trying to login to one of the Production websites every 3 minutes in a scheduled manner.

- On every user login, Liferay creates an entry in the database and queries the database to fetch all the successful logged-in records from the database.

- Liferay database is not indexed properly for reading the SAML SP records. The rogue user has created around 20K records which were read on every login from the user with the rogue account.

- Due to the size of the record set returned for every login from this rogue user account, the Liferay database was not responding to any other requests while reading the records from the database table. This resulted in blocked threads in the JVM that needed access to the database. Due to the blocked threads, the objects in the JVM were marked as active preventing them from being cleared out by the garbage collector. As the number of active objects grew in number, this caused heap exhaustion resulting in “

Out of Memory: Heap space” issue causing the server downtime.

Further fixes

The findings were shared with the development team, along with the following recommendations, to prevent further issues with performance in the future.

- Code has to be rewritten to expire objects or to catch and prevent rogue user logins from happening again.

- Customer web content has to be updated to replace all SASS with precompiled CSS.

- Try to find a permanent solution for the SAML SP session table records recording all the logins and try a way to archive it

Summary

For a complex Portal application like Liferay, there could be issues that are not foreseen until they actually manifest. Due to the complexity and extensibility, an issue can only be excited only under certain conditions. Addition of an Application Performance Management solution like Riverbed can help determine the root cause and can help stabilize the environments.

If you have questions on how you can best leverage our expertise and/or need help with your Liferay DXP implementation, please engage with comments on this blog post, or reach out to us.