Clustering Solr 4.x

Summary

The recommended way to cluster Solr 4 and above is to use SolrCloud, the product’s distributed mode, to manage horizontal scaling of Solr instances.

SolrCloud provides the following advanced features:

- Automatic distribution of index updates to the appropriate shard

- Distribution of search requests across multiple shards

- Assignment of replicas to shards when replicas are available.

- Near Real Time (NRT) searching is supported; when configured, documents become searchable after a “soft” commit.

- Indexing accesses your sharding schema automatically.

- Replication is automatic for backup purposes.

- Recovery is robust and automatic.

- ZooKeeper serves as a repository for cluster state.

Minimum Requirements

The following are the minimum requirements for SolrCloud configuration in a production environment:

ZooKeeper: 3 instances. Standalone servers are recommended for the ZooKeeper instances. If that is not possible, two ZooKeeper instances can run on the servers where Solr is installed, with the third on a separate standalone server.

Solr instances: 2 (this is the minimum). One Solr instance will be the leader (master) and the second will be a replica.

Shards: 1 shard is required as the minimum. A shard is a logical piece (or slice) of a collection. Each shard is made up of one or more replicas. An election is held to determine which replica is the leader.
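The three-instance ZooKeeper minimum follows from majority-quorum arithmetic: an ensemble of n servers stays available only while floor(n/2) + 1 of them are running. The sketch below works through that arithmetic; the function names are illustrative and not part of ZooKeeper.

```shell
#!/bin/sh
# Majority quorum for a ZooKeeper ensemble of n servers: floor(n/2) + 1.
quorum_size() {
  echo $(( $1 / 2 + 1 ))
}

# Number of servers that can fail while a quorum is still available.
tolerated_failures() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 2 3 5; do
  echo "ensemble=$n quorum=$(quorum_size "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

With two servers the quorum is also two, so a single failure stops the ensemble. Three servers is the smallest size that survives the loss of one.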

Implementation Details

ZooKeeper Deployment

The following steps must be completed on each server where ZooKeeper is installed.

1. Download standalone ZooKeeper and extract it to the /opt/liferay/zookeeper directory. This will be zookeeper_install_dir.

2. Change directory to zookeeper_install_dir/conf.

3. Copy zoo_sample.cfg to zoo.cfg.

4. Open zoo.cfg and modify the configuration as appropriate.

For details on the configuration options available, refer to the ZooKeeper documentation:

http://zookeeper.apache.org/doc/r3.3.3/zookeeperAdmin.html

5. Switch to the zookeeper_install_dir/data directory and create a myid file containing the ZooKeeper instance ID number. Assign a different ID to each ZooKeeper server.

For example, on the first server (solr1):

root@solr1$ echo 1 > /opt/liferay/zookeeper/data/myid

6. Start ZooKeeper on all three servers:

root@solr1$ cd /opt/liferay/zookeeper

root@solr1$ ./bin/zkServer.sh start

Integrating Solr with ZooKeeper
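Before moving on to Solr, here is a recap of the ZooKeeper deployment steps as a minimal sketch that writes zoo.cfg and myid for one member of a three-server ensemble. The helper name write_zk_config is hypothetical. The hostnames solr1, solr2 and solr3 are taken from the zkHost value used in this document, the 2888:3888 peer and election ports are the conventional ZooKeeper values and are an assumption here, and dataDir is assumed to be zookeeper_install_dir/data.

```shell
#!/bin/sh
# Hypothetical helper: write zoo.cfg and myid for one ensemble member.
# Arguments: ZooKeeper install directory, numeric ID of this server.
write_zk_config() {
  zk_home="$1"
  my_id="$2"

  mkdir -p "$zk_home/conf" "$zk_home/data"

  # Timing values mirror the defaults shipped in zoo_sample.cfg.
  cat > "$zk_home/conf/zoo.cfg" <<EOF
tickTime=2000
initLimit=10
syncLimit=5
dataDir=$zk_home/data
clientPort=2181
server.1=solr1:2888:3888
server.2=solr2:2888:3888
server.3=solr3:2888:3888
EOF

  # The myid value must match this host's server.N line above.
  echo "$my_id" > "$zk_home/data/myid"
}

# Example (run on solr1): write_zk_config /opt/liferay/zookeeper 1
```

Each server gets the same zoo.cfg; only the myid value differs between hosts.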

1. Create an Apache Tomcat context file on each of the Solr instances in the ${CATALINA_BASE}/conf/Catalina/localhost directory. The name of the file should match the deployed application’s name (for an instance where the deployed Solr instance directory in Tomcat’s webapps directory is “solr”, the name of the file should be “solr.xml”)

...

<Context path="/solr/home"

docBase="/opt/liferay/tomcat-instances/solr-01/docBase/solr.war"

allowLinking="true"

crossContext="true"

debug="0"

antiResourceLocking="false"

privileged="true">

<Environment name="solr/home" override="true" type="java.lang.String" value="/opt/liferay/solr-home/solr-01" />

</Context>

2. Create a docBase directory under the Solr ${CATALINA_BASE} and copy solr.war to that directory.

3. On all Solr instances, create the ${SOLR_HOME}/solr.xml file for shard1 and shard2 respectively, as described below:

...

<solr persistent="true" zkHost="solr1:2181,solr2:2181,solr3:2181">

<cores defaultCoreName="configuration1" adminPath="/admin/cores" zkClientTimeout="${zkClientTimeout:15000}" hostPort="8180" hostContext="solr">

<core loadOnStartup="true" shard="shard1" instanceDir="/opt/liferay/solr-home/solr-01/collection1/" transient="false" name="configuration1"/>

</cores>

</solr>

For example, if you have two shards in your cluster and you want a Solr instance to belong to the second shard, change the value to shard="shard2".
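Because the per-instance solr.xml files differ only in the shard name and instance directory, they can be generated from one template. The following is a minimal sketch, assuming the same attribute values shown above; render_solr_xml is a hypothetical helper, not a Solr tool.

```shell
#!/bin/sh
# Hypothetical helper: print a solr.xml for one Solr instance.
# Arguments: shard name (e.g. shard1), core instance directory.
render_solr_xml() {
  shard="$1"
  instance_dir="$2"
  # \$ keeps ${zkClientTimeout:15000} literal so Solr, not the shell, expands it.
  cat <<EOF
<solr persistent="true" zkHost="solr1:2181,solr2:2181,solr3:2181">
  <cores defaultCoreName="configuration1" adminPath="/admin/cores" zkClientTimeout="\${zkClientTimeout:15000}" hostPort="8180" hostContext="solr">
    <core loadOnStartup="true" shard="$shard" instanceDir="$instance_dir" transient="false" name="configuration1"/>
  </cores>
</solr>
EOF
}

# Example: render_solr_xml shard2 "$SOLR_HOME/collection1/" > "$SOLR_HOME/solr.xml"
```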

Upload Solr config to ZooKeeper:

The Solr configuration has to be uploaded to ZooKeeper for clustering to work.

The most convenient way to do this is to add the following to ${CATALINA_BASE}/bin/setenv.sh on the Solr server:

CATALINA_OPTS="${CATALINA_OPTS} -Dsolr.solr.home=/opt/liferay/solr-home/solr-01 -Dsolr.data.dir=/opt/liferay/solr-home/solr-01/collection1/data -DzkHost=localhost:2181 -Dbootstrap_confdir=/opt/liferay/solr-home/solr-01/collection1/conf/"

The following is optional. Do this only if you require more than one shard.

Add the number of shards to ${CATALINA_BASE}/bin/setenv.sh:

-DnumShards=2

Now start both Solr Tomcat instances using the startup script. The Solr logs should show the Solr instances connecting to the ZooKeeper instances.
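The setenv.sh additions described above can be combined into one fragment. This is a sketch rather than a definitive setenv.sh: the variable names SOLR_HOME_DIR, ZK_HOST and NUM_SHARDS are illustrative, and the paths are the ones used in this document.

```shell
#!/bin/sh
# Sketch of the SolrCloud additions to ${CATALINA_BASE}/bin/setenv.sh.
SOLR_HOME_DIR="/opt/liferay/solr-home/solr-01"
# As in the text; a comma-separated list of all ZooKeeper hosts is also accepted.
ZK_HOST="localhost:2181"
# Optional: leave empty when a single shard is enough.
NUM_SHARDS="2"

CATALINA_OPTS="${CATALINA_OPTS} -Dsolr.solr.home=${SOLR_HOME_DIR}"
CATALINA_OPTS="${CATALINA_OPTS} -Dsolr.data.dir=${SOLR_HOME_DIR}/collection1/data"
CATALINA_OPTS="${CATALINA_OPTS} -DzkHost=${ZK_HOST}"
CATALINA_OPTS="${CATALINA_OPTS} -Dbootstrap_confdir=${SOLR_HOME_DIR}/collection1/conf/"

# Only pass numShards when more than one shard is required.
if [ -n "${NUM_SHARDS}" ]; then
  CATALINA_OPTS="${CATALINA_OPTS} -DnumShards=${NUM_SHARDS}"
fi

export CATALINA_OPTS
```

Building the value in several assignments keeps each system property on its own line, which makes per-instance differences such as the solr-01 directory easier to spot.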

Validate SolrCloud configuration

Switch to zookeeper_install_dir/bin and execute the following:

./zkCli.sh -server localhost:2181

Once you are connected, execute the following:

[zk: localhost:2181(CONNECTED) 3] get /configs/configuration1/schema.xml

The schema.xml should match your ${SOLR_HOME}/collection1/conf/schema.xml.

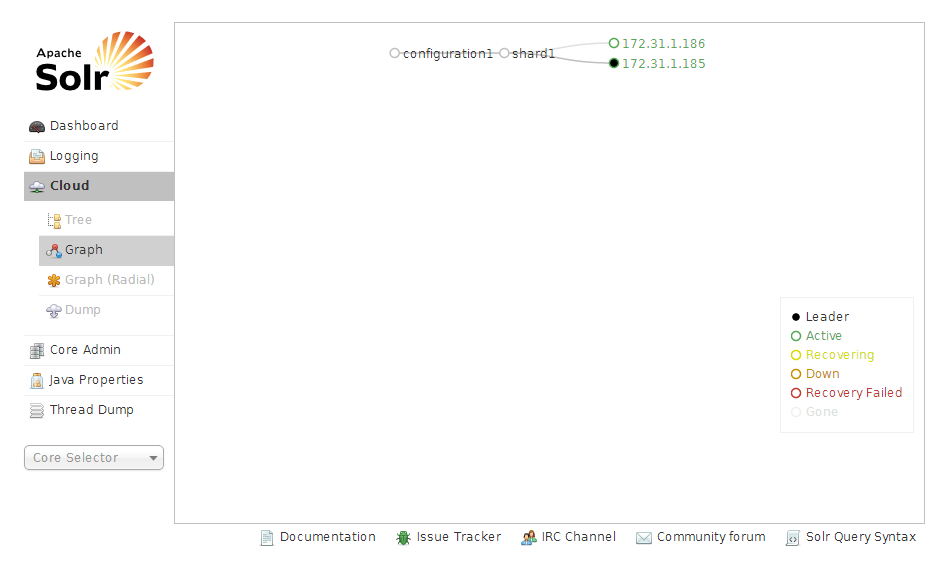

Once the Solr instances are up and running, access the admin console by hitting http://${TOMCAT_IP_ADDRESS}:${TOMCAT_PORT}/solr and click on “Cloud” in the left navigation; you will be presented with a screen that looks similar to the following:

This graph shows the status of all Solr instances that belong to the cluster. The first Solr instance added to a shard becomes its leader (master). Any additional instances added to the shard become replicas. When the leader goes down, one of the replicas is promoted to leader for that shard.
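Alongside the Cloud view, each node can be checked from the command line through the CoreAdmin endpoint configured by adminPath="/admin/cores". The sketch below assumes the hostContext "solr" and port 8180 used earlier, and that curl is installed; core_status_url and check_core are hypothetical helper names.

```shell
#!/bin/sh
# Build the CoreAdmin STATUS URL for one Solr node.
# Arguments: host name, Tomcat HTTP port.
core_status_url() {
  echo "http://$1:$2/solr/admin/cores?action=STATUS&wt=json"
}

# Succeeds only if the node answers the STATUS request with an HTTP success code.
check_core() {
  curl -sf "$(core_status_url "$1" "$2")" > /dev/null
}

# Example: check_core solr1 8180 && echo "solr1 is responding"
```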

Integrate Liferay with Solr

Step 1 Download the solr-web plugin from Liferay Marketplace, install it on the Liferay instances, and restart the servers.

Step 2 Once the webapp is installed successfully, switch to the Liferay ${CATALINA_BASE}/webapps/solr-web/WEB-INF/classes/META-INF/ directory.

Open the solr-spring.xml file in an editor and add the following configuration.

...

<bean id="com.liferay.portal.search.solr.server.SolrServerFactoryImpl" class="com.liferay.portal.search.solr.server.SolrServerFactoryImpl">

<constructor-arg>

<map>

<entry>

<key><value>solr-01</value></key>

<bean class="com.liferay.portal.search.solr.server.BasicAuthSolrServer">

<constructor-arg type="java.lang.String" value="http://solr1:8180/solr" />

</bean>

</entry>

...

</map>

</constructor-arg>

<property name="solrServerSelector" ref="com.liferay.portal.search.solr.server.LoadBalancedSolrServerSelector"/>

</bean>

Restart the Liferay instances. Once they are up and running, any indexing request that reaches either Solr instance is replicated to the other instance by SolrCloud, with ZooKeeper coordinating the cluster state.

If you have any questions, please contact us.